Nidhi Vichare

VP of Data, AI & Platform Architecture · Board Advisor

I lead enterprise AI from strategy to production. The org, the architecture, the operating model, the measurement. Every technology selected, every team built, every dollar measured with causal frameworks, not dashboard correlations. Built the platforms behind $50B+ in enterprise revenue.

I built the platforms behind $50B+ in enterprise revenue.

From Samsung's billion-dollar ad attribution engine to Cisco's 70-petabyte governance framework.

I architect where data becomes measurable business value.

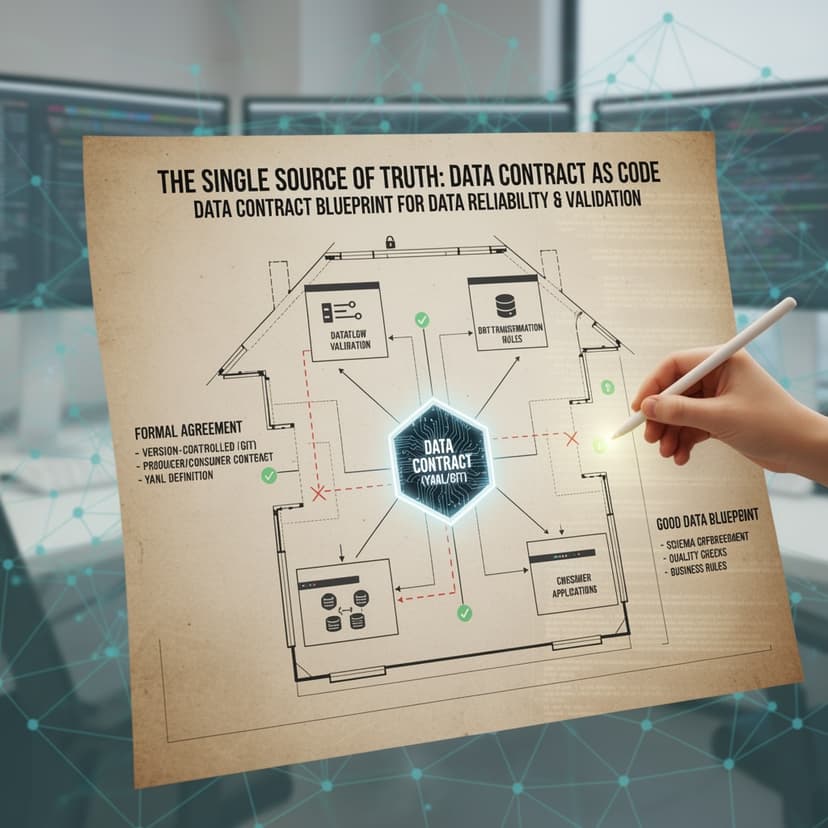

Enterprise data & AI platforms built from scratch. Every technology selected, every team built, every dollar measured. From first pipeline to billion-dollar attribution.

Unlocking data and AI, one essay at a time.

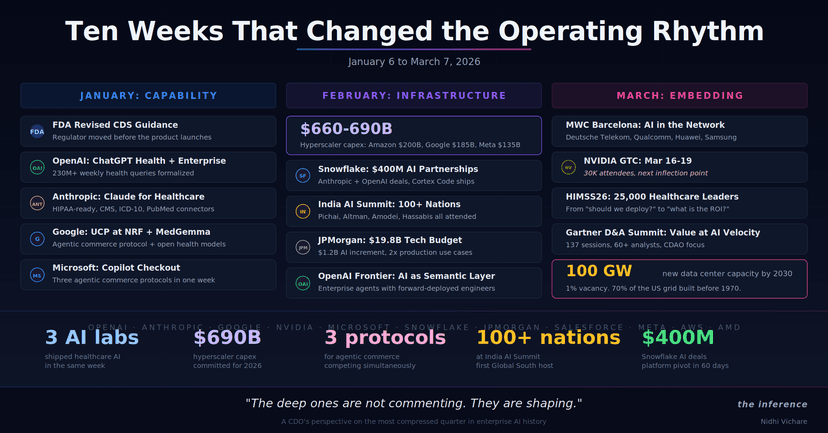

A running collection of essays on applied enterprise AI. The architecture, measurement, and leadership calls that determine which AI programs actually work. Written for senior data and AI leaders making the decisions that do not make the slides.

Catalog strategy. Causal measurement. Agent architectures. And the craft of senior leadership in a year where every slide has AI on it.

46 essays · Enterprise AI strategy, data architecture, and leadership.

Nidhi Vichare is a Chief Data and AI Officer, enterprise AI architect, and data platform executive. She writes about enterprise AI strategy, data architecture, causal measurement, AI ROI, agentic systems, and modern leadership for senior data and AI leaders.

Enterprise-scale systems I've designed and shipped.